Abstract

Recently, the self-supervised learning framework data2vec has shown inspiring performance for various modalities using a masked student-teacher approach. However, it remains open whether such a framework generalizes to the unique challenges of 3D point clouds. To answer this question, we extend data2vec to the point cloud domain and report encouraging results on several downstream tasks. In an in-depth analysis, we discover that the leakage of positional information reveals the overall object shape to the student even under heavy masking and thus prevents data2vec from learning strong representations for point clouds. We address this 3D-specific shortcoming by proposing point2vec, which unleashes the full potential of data2vec-like pre-training on point clouds. Our experiments show that point2vec outperforms other self-supervised methods on shape classification and few-shot learning on ModelNet40 and ScanObjectNN, while achieving competitive results on part segmentation on ShapeNetParts. These results suggest that the learned representations are strong and transferable, highlighting point2vec as a promising direction for self-supervised learning of point cloud representations.

Video

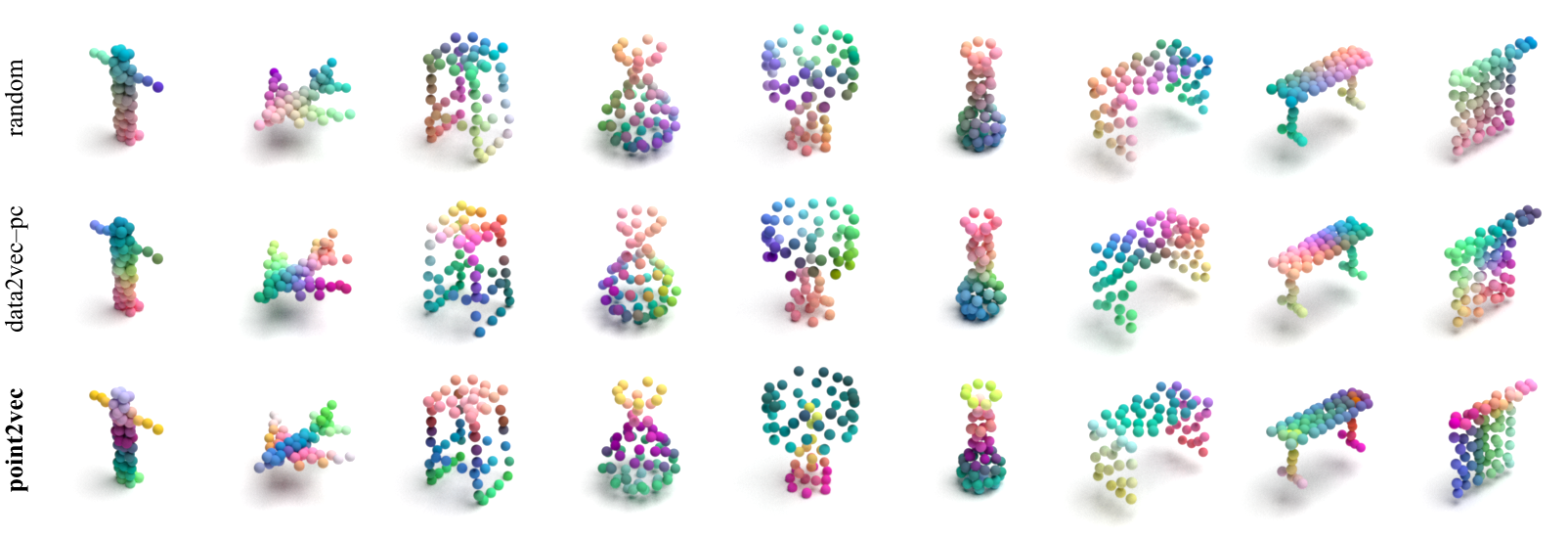

Visualization of Learned Representations

We use PCA to project the learned representations into RGB space. Both a random initialization and data2vec–pc pre-training show a fairly strong positional bias, whereas point2vec exhibits a stronger semantic grouping without being trained on downstream dense prediction tasks.
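The PCA-to-RGB projection described above can be sketched roughly as follows. This is a minimal NumPy illustration, not the authors' visualization code: the function name `pca_to_rgb`, the per-channel min-max rescaling, and the feature shape in the usage lines are assumptions made for the example.

```python
import numpy as np


def pca_to_rgb(features: np.ndarray) -> np.ndarray:
    """Map (N, D) features to (N, 3) colors in [0, 1] via their top-3 principal components.

    Hypothetical helper: assumes N >= 3 and D >= 3, and that a per-channel
    min-max rescaling is an acceptable way to turn components into colors.
    """
    # Center the features so the SVD yields principal directions.
    centered = features - features.mean(axis=0, keepdims=True)
    # Rows of vt are the principal directions, sorted by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    # Project every feature vector onto the three leading directions.
    projected = centered @ vt[:3].T
    # Rescale each of the three channels independently to [0, 1].
    lo = projected.min(axis=0, keepdims=True)
    hi = projected.max(axis=0, keepdims=True)
    return (projected - lo) / np.maximum(hi - lo, 1e-12)


# Usage with random stand-in features (64 patch embeddings of width 384, both assumed).
rng = np.random.default_rng(0)
colors = pca_to_rgb(rng.normal(size=(64, 384)))
```

Each row of `colors` can then be assigned to the points of the corresponding patch, so that patches with similar representations receive similar colors.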

BibTeX

@inproceedings{abouzeid2023point2vec,
  title={Point2Vec for Self-Supervised Representation Learning on Point Clouds},
  author={Abou Zeid, Karim and Schult, Jonas and Hermans, Alexander and Leibe, Bastian},
  booktitle={German Conference on Pattern Recognition (GCPR)},
  year={2023},
}